mirror of https://github.com/Mai-with-u/MaiBot.git

Merge branch 'debug' into Willing_cycles_and_better_response

commit

50db5e2ede

|

|

@ -0,0 +1,8 @@

|

|||

name: Ruff

|

||||

on: [ push, pull_request ]

|

||||

jobs:

|

||||

ruff:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v4

|

||||

- uses: astral-sh/ruff-action@v3

|

||||

|

|

@ -190,7 +190,6 @@ cython_debug/

|

|||

|

||||

# PyPI configuration file

|

||||

.pypirc

|

||||

.env

|

||||

|

||||

# jieba

|

||||

jieba.cache

|

||||

|

|

@ -199,4 +198,9 @@ jieba.cache

|

|||

!.vscode/settings.json

|

||||

|

||||

# direnv

|

||||

/.direnv

|

||||

/.direnv

|

||||

|

||||

# JetBrains

|

||||

.idea

|

||||

*.iml

|

||||

*.ipr

|

||||

|

|

|

|||

|

|

@ -0,0 +1,10 @@

|

|||

repos:

|

||||

- repo: https://github.com/astral-sh/ruff-pre-commit

|

||||

# Ruff version.

|

||||

rev: v0.9.10

|

||||

hooks:

|

||||

# Run the linter.

|

||||

- id: ruff

|

||||

args: [ --fix ]

|

||||

# Run the formatter.

|

||||

- id: ruff-format

|

||||

|

|

@ -61,6 +61,7 @@

|

|||

|

||||

- 📦 **Windows one-click deployment**: run `run.bat` in the project root; once deployment finishes, follow the configuration guide below

|

||||

|

||||

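- 📦 Linux automatic deployment (experimental): download and run `run.sh` from the project root and follow the prompts; once deployment finishes, follow the configuration guide below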

|

||||

|

||||

- [📦 Windows manual deployment guide](docs/manual_deploy_windows.md)

|

||||

|

||||

|

|

|

|||

99

bot.py

|

|

@ -12,26 +12,11 @@ from loguru import logger

|

|||

from nonebot.adapters.onebot.v11 import Adapter

|

||||

import platform

|

||||

|

||||

from src.common.database import Database

|

||||

|

||||

# 获取没有加载env时的环境变量

|

||||

env_mask = {key: os.getenv(key) for key in os.environ}

|

||||

|

||||

uvicorn_server = None

|

||||

|

||||

# 配置日志

|

||||

log_path = os.path.join(os.getcwd(), "logs")

|

||||

if not os.path.exists(log_path):

|

||||

os.makedirs(log_path)

|

||||

|

||||

# 添加文件日志,启用rotation和retention

|

||||

logger.add(

|

||||

os.path.join(log_path, "maimbot_{time:YYYY-MM-DD}.log"),

|

||||

rotation="00:00", # 每天0点创建新文件

|

||||

retention="30 days", # 保留30天的日志

|

||||

level="INFO",

|

||||

encoding="utf-8"

|

||||

)

|

||||

|

||||

def easter_egg():

|

||||

# 彩蛋

|

||||

|

|

@ -78,7 +63,7 @@ def init_env():

|

|||

|

||||

# 首先加载基础环境变量.env

|

||||

if os.path.exists(".env"):

|

||||

load_dotenv(".env",override=True)

|

||||

load_dotenv(".env", override=True)

|

||||

logger.success("成功加载基础环境变量配置")

|

||||

|

||||

|

||||

|

|

@ -92,10 +77,7 @@ def load_env():

|

|||

logger.success("加载开发环境变量配置")

|

||||

load_dotenv(".env.dev", override=True) # override=True 允许覆盖已存在的环境变量

|

||||

|

||||

fn_map = {

|

||||

"prod": prod,

|

||||

"dev": dev

|

||||

}

|

||||

fn_map = {"prod": prod, "dev": dev}

|

||||

|

||||

env = os.getenv("ENVIRONMENT")

|

||||

logger.info(f"[load_env] 当前的 ENVIRONMENT 变量值:{env}")

|

||||

|

|

@ -111,40 +93,45 @@ def load_env():

|

|||

logger.error(f"ENVIRONMENT 配置错误,请检查 .env 文件中的 ENVIRONMENT 变量及对应 .env.{env} 是否存在")

|

||||

raise RuntimeError(f"ENVIRONMENT 配置错误,请检查 .env 文件中的 ENVIRONMENT 变量及对应 .env.{env} 是否存在")

|

||||

|

||||

def init_database():

|

||||

Database.initialize(

|

||||

uri=os.getenv("MONGODB_URI"),

|

||||

host=os.getenv("MONGODB_HOST", "127.0.0.1"),

|

||||

port=int(os.getenv("MONGODB_PORT", "27017")),

|

||||

db_name=os.getenv("DATABASE_NAME", "MegBot"),

|

||||

username=os.getenv("MONGODB_USERNAME"),

|

||||

password=os.getenv("MONGODB_PASSWORD"),

|

||||

auth_source=os.getenv("MONGODB_AUTH_SOURCE"),

|

||||

)

|

||||

|

||||

|

||||

def load_logger():

|

||||

logger.remove() # 移除默认配置

|

||||

if os.getenv("ENVIRONMENT") == "dev":

|

||||

logger.add(

|

||||

sys.stderr,

|

||||

format="<green>{time:YYYY-MM-DD HH:mm:ss.SSS}</green> <fg #777777>|</> <level>{level: <7}</level> <fg "

|

||||

"#777777>|</> <cyan>{name:.<8}</cyan>:<cyan>{function:.<8}</cyan>:<cyan>{line: >4}</cyan> <fg "

|

||||

"#777777>-</> <level>{message}</level>",

|

||||

colorize=True,

|

||||

level=os.getenv("LOG_LEVEL", "DEBUG"), # 根据环境设置日志级别,默认为DEBUG

|

||||

)

|

||||

else:

|

||||

logger.add(

|

||||

sys.stderr,

|

||||

format="<green>{time:YYYY-MM-DD HH:mm:ss.SSS}</green> <fg #777777>|</> <level>{level: <7}</level> <fg "

|

||||

"#777777>|</> <cyan>{name:.<8}</cyan>:<cyan>{function:.<8}</cyan>:<cyan>{line: >4}</cyan> <fg "

|

||||

"#777777>-</> <level>{message}</level>",

|

||||

colorize=True,

|

||||

level=os.getenv("LOG_LEVEL", "INFO"), # 根据环境设置日志级别,默认为INFO

|

||||

filter=lambda record: "nonebot" not in record["name"]

|

||||

)

|

||||

logger.remove()

|

||||

|

||||

# 配置日志基础路径

|

||||

log_path = os.path.join(os.getcwd(), "logs")

|

||||

if not os.path.exists(log_path):

|

||||

os.makedirs(log_path)

|

||||

|

||||

current_env = os.getenv("ENVIRONMENT", "dev")

|

||||

|

||||

# 公共配置参数

|

||||

log_level = os.getenv("LOG_LEVEL", "INFO" if current_env == "prod" else "DEBUG")

|

||||

log_filter = lambda record: (

|

||||

("nonebot" not in record["name"] or record["level"].no >= logger.level("ERROR").no)

|

||||

if current_env == "prod"

|

||||

else True

|

||||

)

|

||||

log_format = (

|

||||

"<green>{time:YYYY-MM-DD HH:mm:ss.SSS}</green> "

|

||||

"<fg #777777>|</> <level>{level: <7}</level> "

|

||||

"<fg #777777>|</> <cyan>{name:.<8}</cyan>:<cyan>{function:.<8}</cyan>:<cyan>{line: >4}</cyan> "

|

||||

"<fg #777777>-</> <level>{message}</level>"

|

||||

)

|

||||

|

||||

# 日志文件储存至/logs

|

||||

logger.add(

|

||||

os.path.join(log_path, "maimbot_{time:YYYY-MM-DD}.log"),

|

||||

rotation="00:00",

|

||||

retention="30 days",

|

||||

format=log_format,

|

||||

colorize=False,

|

||||

level=log_level,

|

||||

filter=log_filter,

|

||||

encoding="utf-8",

|

||||

)

|

||||

|

||||

# 终端输出

|

||||

logger.add(sys.stderr, format=log_format, colorize=True, level=log_level, filter=log_filter)

|

||||

|

||||

|

||||

def scan_provider(env_config: dict):

|

||||

|

|
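The rewritten `load_logger` shares one level and one filter between the file sink and the terminal sink. The following is a minimal sketch of that selection logic only, written without loguru so it runs on its own. `make_log_filter`, `default_log_level`, and `record` are hypothetical names for illustration, and the constant 40 is loguru's numeric severity for `ERROR`.

```python
from types import SimpleNamespace

ERROR_NO = 40  # loguru's numeric severity for ERROR


def make_log_filter(current_env: str):
    """Return a predicate over loguru-style record dicts."""

    def log_filter(record: dict) -> bool:
        if current_env != "prod":
            return True  # outside prod: keep every record
        # prod: drop nonebot records unless they are ERROR or above
        return "nonebot" not in record["name"] or record["level"].no >= ERROR_NO

    return log_filter


def default_log_level(env: dict) -> str:
    """LOG_LEVEL wins; otherwise INFO in prod and DEBUG elsewhere."""
    current_env = env.get("ENVIRONMENT", "dev")
    return env.get("LOG_LEVEL", "INFO" if current_env == "prod" else "DEBUG")


def record(name: str, level_no: int) -> dict:
    """Hypothetical stand-in for a loguru record (only the fields the filter reads)."""
    return {"name": name, "level": SimpleNamespace(no=level_no)}


prod_filter = make_log_filter("prod")
print(prod_filter(record("nonebot.adapters", 20)))  # INFO from nonebot in prod
print(prod_filter(record("nonebot.adapters", 40)))  # ERROR from nonebot in prod
```

Under this reading, an unset `ENVIRONMENT` behaves like `dev`, so nothing is filtered and the default level is `DEBUG`.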

@ -174,10 +161,7 @@ def scan_provider(env_config: dict):

|

|||

# 检查每个 provider 是否同时存在 url 和 key

|

||||

for provider_name, config in provider.items():

|

||||

if config["url"] is None or config["key"] is None:

|

||||

logger.error(

|

||||

f"provider 内容:{config}\n"

|

||||

f"env_config 内容:{env_config}"

|

||||

)

|

||||

logger.error(f"provider 内容:{config}\nenv_config 内容:{env_config}")

|

||||

raise ValueError(f"请检查 '{provider_name}' 提供商配置是否丢失 BASE_URL 或 KEY 环境变量")

|

||||

|

||||

|

||||

|

|
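The hunk above only shows the final check in `scan_provider`: every provider must have both a `url` and a `key`. As a rough sketch of how such a check might work end to end, the version below groups environment variables by suffix. The `_BASE_URL` / `_KEY` suffix matching is an assumption inferred from the error message, not taken from the diff, and the provider names in the usage lines are made up.

```python
def scan_provider(env_config: dict) -> dict:
    """Group <NAME>_BASE_URL / <NAME>_KEY pairs and reject incomplete ones.

    Assumption: providers are discovered purely by these two suffixes.
    """
    provider: dict = {}
    for key, value in env_config.items():
        if key.endswith("_BASE_URL"):
            name = key[: -len("_BASE_URL")]
            provider.setdefault(name, {"url": None, "key": None})["url"] = value
        elif key.endswith("_KEY"):
            name = key[: -len("_KEY")]
            provider.setdefault(name, {"url": None, "key": None})["key"] = value

    # Every provider needs both halves of the pair.
    for provider_name, config in provider.items():
        if config["url"] is None or config["key"] is None:
            raise ValueError(f"provider '{provider_name}' is missing BASE_URL or KEY")
    return provider


# Hypothetical environment: one complete provider plus an unrelated variable.
print(scan_provider({
    "EXAMPLE_BASE_URL": "https://example.invalid/v1",
    "EXAMPLE_KEY": "sk-placeholder",
    "HOST": "127.0.0.1",
}))
```

Raising on the first incomplete provider keeps a misconfigured `.env` file from surfacing later as an opaque request failure.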

@ -206,7 +190,7 @@ async def uvicorn_main():

|

|||

reload=os.getenv("ENVIRONMENT") == "dev",

|

||||

timeout_graceful_shutdown=5,

|

||||

log_config=None,

|

||||

access_log=False

|

||||

access_log=False,

|

||||

)

|

||||

server = uvicorn.Server(config)

|

||||

uvicorn_server = server

|

||||

|

|

@ -216,14 +200,13 @@ async def uvicorn_main():

|

|||

def raw_main():

|

||||

# 利用 TZ 环境变量设定程序工作的时区

|

||||

# 仅保证行为一致,不依赖 localtime(),实际对生产环境几乎没有作用

|

||||

if platform.system().lower() != 'windows':

|

||||

if platform.system().lower() != "windows":

|

||||

time.tzset()

|

||||

|

||||

easter_egg()

|

||||

init_config()

|

||||

init_env()

|

||||

load_env()

|

||||

init_database() # 加载完成环境后初始化database

|

||||

load_logger()

|

||||

|

||||

env_config = {key: os.getenv(key) for key in os.environ}

|

||||

|

|

|

|||

Binary file not shown.

|

After Width: | Height: | Size: 20 KiB |

Binary file not shown.

|

After Width: | Height: | Size: 36 KiB |

|

|

@ -0,0 +1 @@

|

|||

gource gource.log --user-image-dir docs/avatars/ --default-user-image docs/avatars/default.png

|

||||

|

|

@ -121,6 +121,7 @@ sudo nano /etc/systemd/system/maimbot.service

|

|||

Enter the following:

`<maimbot_directory>`: your maimbot directory

`<venv_directory>`: your venv environment (the absolute path of the path that follows `source` in the `source maimbot/bin/activate` command run after creating the environment above)

|

||||

|

||||

```ini

|

||||

|

|

|

|||

Binary file not shown.

|

After Width: | Height: | Size: 107 KiB |

Binary file not shown.

|

After Width: | Height: | Size: 208 KiB |

|

|

@ -0,0 +1,67 @@

|

|||

# Synology NAS Deployment Guide

**The author uses DSM 7.2.2; the steps may differ slightly on other DSM versions**
**Container Manager is required; some entry-level Synology NAS models may not support it**

|

||||

|

||||

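## Deployment steps

### Create the configuration directory

Open `DSM ➡️ Control Panel ➡️ Shared Folder`, click `Create`, and create a shared folder
Only the name needs to be set; leave every other setting at its default. If you already have a shared folder dedicated to docker, skip this step

Open `DSM ➡️ FileStation` and create a `MaiMBot` folder inside the shared folder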

|

||||

|

||||

### 准备配置文件

|

||||

|

||||

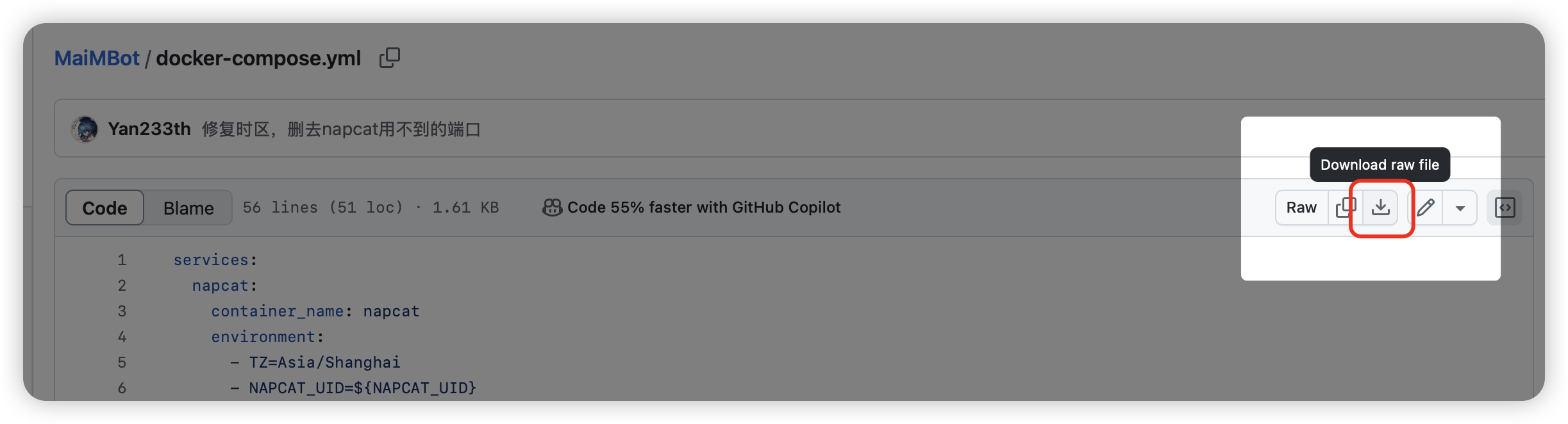

docker-compose.yml: https://github.com/SengokuCola/MaiMBot/blob/main/docker-compose.yml

|

||||

下载后打开,将 `services-mongodb-image` 修改为 `mongo:4.4.24`。这是因为最新的 MongoDB 强制要求 AVX 指令集,而群晖似乎不支持这个指令集

|

||||

|

||||

|

||||

bot_config.toml: https://github.com/SengokuCola/MaiMBot/blob/main/template/bot_config_template.toml

|

||||

下载后,重命名为 `bot_config.toml`

|

||||

打开它,按自己的需求填写配置文件

|

||||

|

||||

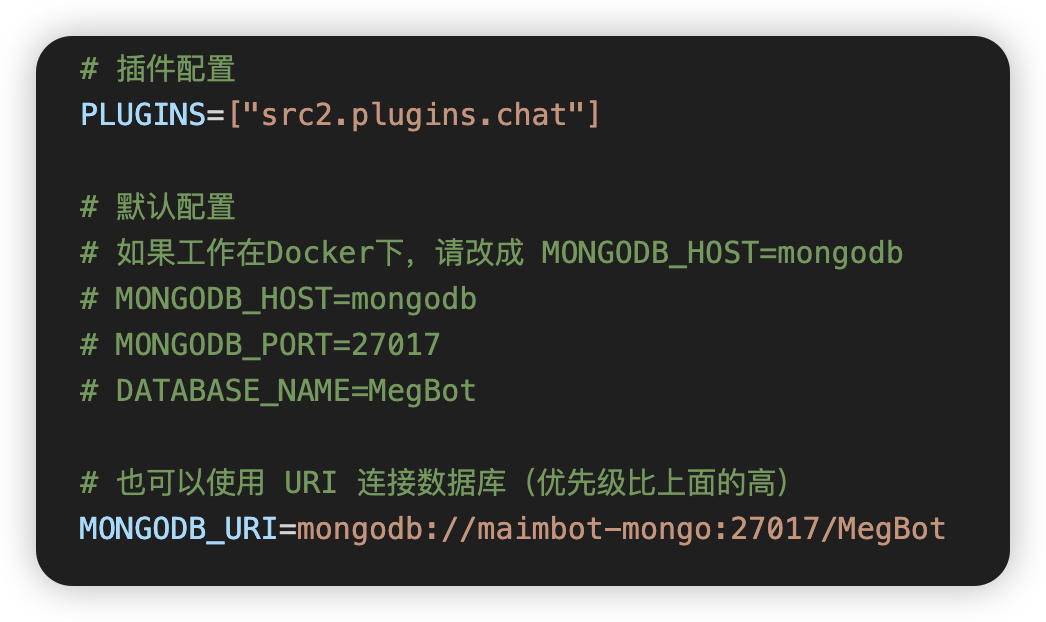

.env.prod: https://github.com/SengokuCola/MaiMBot/blob/main/template.env

|

||||

下载后,重命名为 `.env.prod`

|

||||

按下图修改 mongodb 设置,使用 `MONGODB_URI`

|

||||

|

||||

|

||||

把 `bot_config.toml` 和 `.env.prod` 放入之前创建的 `MaiMBot`文件夹

|

||||
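The guide asks for `MONGODB_URI` in `.env.prod`. The sketch below mirrors the connection priority that `src/common/database.py` applies in this same commit: a `mongodb://` URI first, then username and password, then a plain host and port. `mongo_client_args` is a hypothetical helper that only returns the arguments that would be handed to `MongoClient`, and the URI `mongodb://mongodb:27017` assumes the compose service is named `mongodb`.

```python
from typing import Optional


def mongo_client_args(env: dict) -> dict:
    """Pick MongoClient keyword arguments from environment-style settings."""
    uri: Optional[str] = env.get("MONGODB_URI")
    host = env.get("MONGODB_HOST", "127.0.0.1")
    port = int(env.get("MONGODB_PORT", "27017"))
    username = env.get("MONGODB_USERNAME")
    password = env.get("MONGODB_PASSWORD")

    if uri and uri.startswith("mongodb://"):
        # A URI takes priority; host/port/credentials are ignored.
        return {"host": uri}
    if username and password:
        # Authenticated connection.
        return {
            "host": host,
            "port": port,
            "username": username,
            "password": password,
            "authSource": env.get("MONGODB_AUTH_SOURCE"),
        }
    # Unauthenticated connection.
    return {"host": host, "port": port}


# Hypothetical .env.prod values for a compose deployment.
print(mongo_client_args({"MONGODB_URI": "mongodb://mongodb:27017"}))
```

Because the check is a literal `mongodb://` prefix, a `mongodb+srv://` URI would fall through to the host and port branch.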

|

||||

#### How do I download the files?

Click here!

|

||||

|

||||

### Create the project

Open `DSM ➡️ ContainerManager ➡️ Project`, click `Create` to create a project, and fill in the following:

- Project name: `maimbot`
- Path: the `MaiMBot` folder created earlier
- Source: `Upload docker-compose.yml`
- File: the `docker-compose.yml` file downloaded earlier

Illustration:

Click Next through the remaining steps and wait for the project to be created

|

||||

|

||||

### Set up Napcat

1. Log in to napcat
   Open napcat at `http://<your-nas-address>:6099` and log in with the token
   To find the token, open `DSM ➡️ ContainerManager ➡️ Project ➡️ MaiMBot ➡️ Container ➡️ Napcat ➡️ Log` and look for a log line like `[WebUi] WebUi Local Panel Url: http://127.0.0.1:6099/webui?token=xxxx`
   The part after `token=` is your napcat token

2. Follow the prompts to log in to the secondary QQ account you prepared for MaiMai

3. Set up the websocket client
   `Network Configuration -> New -> Websocket Client`: choose any name, enter `ws://maimbot:8080/onebot/v11/ws` in the URL field, then enable and save.
   If you changed the container name, replace `maimbot` with the name you chose

|

||||

|

||||

### Deployment complete

Find a group and send something like `麦麦,你在吗` ("MaiMai, are you there?")
If everything is working, the bot should now reply

|

||||

Binary file not shown.

|

After Width: | Height: | Size: 170 KiB |

Binary file not shown.

|

After Width: | Height: | Size: 133 KiB |

|

|

@ -0,0 +1,278 @@

|

|||

#!/bin/bash

|

||||

|

||||

# Maimbot 一键安装脚本 by Cookie987

|

||||

# 适用于Debian系

|

||||

# 请小心使用任何一键脚本!

|

||||

|

||||

# 如无法访问GitHub请修改此处镜像地址

|

||||

|

||||

LANG=C.UTF-8

|

||||

|

||||

GITHUB_REPO="https://ghfast.top/https://github.com/SengokuCola/MaiMBot.git"

|

||||

|

||||

# 颜色输出

|

||||

GREEN="\e[32m"

|

||||

RED="\e[31m"

|

||||

RESET="\e[0m"

|

||||

|

||||

# 需要的基本软件包

|

||||

REQUIRED_PACKAGES=("git" "sudo" "python3" "python3-venv" "curl" "gnupg" "python3-pip")

|

||||

|

||||

# 默认项目目录

|

||||

DEFAULT_INSTALL_DIR="/opt/maimbot"

|

||||

|

||||

# 服务名称

|

||||

SERVICE_NAME="maimbot"

|

||||

|

||||

IS_INSTALL_MONGODB=false

|

||||

IS_INSTALL_NAPCAT=false

|

||||

|

||||

# 1/6: 检测是否安装 whiptail

|

||||

if ! command -v whiptail &>/dev/null; then

|

||||

echo -e "${RED}[1/6] whiptail 未安装,正在安装...${RESET}"

|

||||

apt update && apt install -y whiptail

|

||||

fi

|

||||

|

||||

get_os_info() {

|

||||

if command -v lsb_release &>/dev/null; then

|

||||

OS_INFO=$(lsb_release -d | cut -f2)

|

||||

elif [[ -f /etc/os-release ]]; then

|

||||

OS_INFO=$(grep "^PRETTY_NAME=" /etc/os-release | cut -d '"' -f2)

|

||||

else

|

||||

OS_INFO="Unknown OS"

|

||||

fi

|

||||

echo "$OS_INFO"

|

||||

}

|

||||

|

||||

# 检查系统

|

||||

check_system() {

|

||||

# 检查是否为 root 用户

|

||||

if [[ "$(id -u)" -ne 0 ]]; then

|

||||

whiptail --title "🚫 权限不足" --msgbox "请使用 root 用户运行此脚本!\n执行方式: sudo bash $0" 10 60

|

||||

exit 1

|

||||

fi

|

||||

|

||||

if [[ -f /etc/os-release ]]; then

|

||||

source /etc/os-release

|

||||

if [[ "$ID" != "debian" || "$VERSION_ID" != "12" ]]; then

|

||||

whiptail --title "🚫 不支持的系统" --msgbox "此脚本仅支持 Debian 12 (Bookworm)!\n当前系统: $PRETTY_NAME\n安装已终止。" 10 60

|

||||

exit 1

|

||||

fi

|

||||

else

|

||||

whiptail --title "⚠️ 无法检测系统" --msgbox "无法识别系统版本,安装已终止。" 10 60

|

||||

exit 1

|

||||

fi

|

||||

}

|

||||

|

||||

# 3/6: 询问用户是否安装缺失的软件包

|

||||

install_packages() {

|

||||

missing_packages=()

|

||||

for package in "${REQUIRED_PACKAGES[@]}"; do

|

||||

if ! dpkg -s "$package" &>/dev/null; then

|

||||

missing_packages+=("$package")

|

||||

fi

|

||||

done

|

||||

|

||||

if [[ ${#missing_packages[@]} -gt 0 ]]; then

|

||||

whiptail --title "📦 [3/6] 软件包检查" --yesno "检测到以下必须的依赖项目缺失:\n${missing_packages[*]}\n\n是否要自动安装?" 12 60

|

||||

if [[ $? -eq 0 ]]; then

|

||||

return 0

|

||||

else

|

||||

whiptail --title "⚠️ 注意" --yesno "某些必要的依赖项未安装,可能会影响运行!\n是否继续?" 10 60 || exit 1

|

||||

fi

|

||||

fi

|

||||

}

|

||||

|

||||

# 4/6: Python 版本检查

|

||||

check_python() {

|

||||

PYTHON_VERSION=$(python3 -c 'import sys; print(f"{sys.version_info.major}.{sys.version_info.minor}")')

|

||||

|

||||

python3 -c "import sys; exit(0) if sys.version_info >= (3,9) else exit(1)"

|

||||

if [[ $? -ne 0 ]]; then

|

||||

whiptail --title "⚠️ [4/6] Python 版本过低" --msgbox "检测到 Python 版本为 $PYTHON_VERSION,需要 3.9 或以上!\n请升级 Python 后重新运行本脚本。" 10 60

|

||||

exit 1

|

||||

fi

|

||||

}

|

||||

|

||||

# 5/6: 选择分支

|

||||

choose_branch() {

|

||||

BRANCH=$(whiptail --title "🔀 [5/6] 选择 Maimbot 分支" --menu "请选择要安装的 Maimbot 分支:" 15 60 2 \

|

||||

"main" "稳定版本(推荐)" \

|

||||

"debug" "开发版本(可能不稳定)" 3>&1 1>&2 2>&3)

|

||||

|

||||

if [[ -z "$BRANCH" ]]; then

|

||||

BRANCH="main"

|

||||

whiptail --title "🔀 默认选择" --msgbox "未选择分支,默认安装稳定版本(main)" 10 60

|

||||

fi

|

||||

}

|

||||

|

||||

# 6/6: 选择安装路径

|

||||

choose_install_dir() {

|

||||

INSTALL_DIR=$(whiptail --title "📂 [6/6] 选择安装路径" --inputbox "请输入 Maimbot 的安装目录:" 10 60 "$DEFAULT_INSTALL_DIR" 3>&1 1>&2 2>&3)

|

||||

|

||||

if [[ -z "$INSTALL_DIR" ]]; then

|

||||

whiptail --title "⚠️ 取消输入" --yesno "未输入安装路径,是否退出安装?" 10 60

|

||||

if [[ $? -ne 0 ]]; then

|

||||

INSTALL_DIR="$DEFAULT_INSTALL_DIR"

|

||||

else

|

||||

exit 1

|

||||

fi

|

||||

fi

|

||||

}

|

||||

|

||||

# 显示确认界面

|

||||

confirm_install() {

|

||||

local confirm_message="请确认以下更改:\n\n"

|

||||

|

||||

if [[ ${#missing_packages[@]} -gt 0 ]]; then

|

||||

confirm_message+="📦 安装缺失的依赖项: ${missing_packages[*]}\n"

|

||||

else

|

||||

confirm_message+="✅ 所有依赖项已安装\n"

|

||||

fi

|

||||

|

||||

confirm_message+="📂 安装麦麦Bot到: $INSTALL_DIR\n"

|

||||

confirm_message+="🔀 分支: $BRANCH\n"

|

||||

|

||||

if [[ "$MONGO_INSTALLED" == "true" ]]; then

|

||||

confirm_message+="✅ MongoDB 已安装\n"

|

||||

else

|

||||

if [[ "$IS_INSTALL_MONGODB" == "true" ]]; then

|

||||

confirm_message+="📦 安装 MongoDB\n"

|

||||

fi

|

||||

fi

|

||||

|

||||

if [[ "$NAPCAT_INSTALLED" == "true" ]]; then

|

||||

confirm_message+="✅ NapCat 已安装\n"

|

||||

else

|

||||

if [[ "$IS_INSTALL_NAPCAT" == "true" ]]; then

|

||||

confirm_message+="📦 安装 NapCat\n"

|

||||

fi

|

||||

fi

|

||||

|

||||

confirm_message+="🛠️ 添加麦麦Bot作为系统服务 ($SERVICE_NAME.service)\n"

|

||||

|

||||

confirm_message+="\n\n注意:本脚本默认使用ghfast.top为GitHub进行加速,如不想使用请手动修改脚本开头的GITHUB_REPO变量。"

|

||||

whiptail --title "🔧 安装确认" --yesno "$confirm_message\n\n是否继续安装?" 15 60

|

||||

if [[ $? -ne 0 ]]; then

|

||||

whiptail --title "🚫 取消安装" --msgbox "安装已取消。" 10 60

|

||||

exit 1

|

||||

fi

|

||||

}

|

||||

|

||||

check_mongodb() {

|

||||

if command -v mongod &>/dev/null; then

|

||||

MONGO_INSTALLED=true

|

||||

else

|

||||

MONGO_INSTALLED=false

|

||||

fi

|

||||

}

|

||||

|

||||

# 安装 MongoDB

|

||||

install_mongodb() {

|

||||

if [[ "$MONGO_INSTALLED" == "true" ]]; then

|

||||

return 0

|

||||

fi

|

||||

|

||||

whiptail --title "📦 [3/6] 软件包检查" --yesno "检测到未安装MongoDB,是否安装?\n如果您想使用远程数据库,请跳过此步。" 10 60

|

||||

if [[ $? -ne 0 ]]; then

|

||||

return 1

|

||||

fi

|

||||

IS_INSTALL_MONGODB=true

|

||||

}

|

||||

|

||||

check_napcat() {

|

||||

if command -v napcat &>/dev/null; then

|

||||

NAPCAT_INSTALLED=true

|

||||

else

|

||||

NAPCAT_INSTALLED=false

|

||||

fi

|

||||

}

|

||||

|

||||

install_napcat() {

|

||||

if [[ "$NAPCAT_INSTALLED" == "true" ]]; then

|

||||

return 0

|

||||

fi

|

||||

|

||||

whiptail --title "📦 [3/6] 软件包检查" --yesno "检测到未安装NapCat,是否安装?\n如果您想使用远程NapCat,请跳过此步。" 10 60

|

||||

if [[ $? -ne 0 ]]; then

|

||||

return 1

|

||||

fi

|

||||

IS_INSTALL_NAPCAT=true

|

||||

}

|

||||

|

||||

# 运行安装步骤

|

||||

check_system

|

||||

check_mongodb

|

||||

check_napcat

|

||||

install_packages

|

||||

install_mongodb

|

||||

install_napcat

|

||||

check_python

|

||||

choose_branch

|

||||

choose_install_dir

|

||||

confirm_install

|

||||

|

||||

# 开始安装

|

||||

whiptail --title "🚀 开始安装" --msgbox "所有环境检查完毕,即将开始安装麦麦Bot!" 10 60

|

||||

|

||||

echo -e "${GREEN}安装依赖项...${RESET}"

|

||||

|

||||

apt update && apt install -y "${missing_packages[@]}"

|

||||

|

||||

|

||||

if [[ "$IS_INSTALL_MONGODB" == "true" ]]; then

|

||||

echo -e "${GREEN}安装 MongoDB...${RESET}"

|

||||

curl -fsSL https://www.mongodb.org/static/pgp/server-8.0.asc | gpg -o /usr/share/keyrings/mongodb-server-8.0.gpg --dearmor

|

||||

echo "deb [ signed-by=/usr/share/keyrings/mongodb-server-8.0.gpg ] http://repo.mongodb.org/apt/debian bookworm/mongodb-org/8.0 main" | sudo tee /etc/apt/sources.list.d/mongodb-org-8.0.list

|

||||

apt-get update

|

||||

apt-get install -y mongodb-org

|

||||

|

||||

systemctl enable mongod

|

||||

systemctl start mongod

|

||||

fi

|

||||

|

||||

if [[ "$IS_INSTALL_NAPCAT" == "true" ]]; then

|

||||

echo -e "${GREEN}安装 NapCat...${RESET}"

|

||||

curl -o napcat.sh https://nclatest.znin.net/NapNeko/NapCat-Installer/main/script/install.sh && bash napcat.sh

|

||||

fi

|

||||

|

||||

echo -e "${GREEN}创建 Python 虚拟环境...${RESET}"

|

||||

mkdir -p "$INSTALL_DIR"

|

||||

cd "$INSTALL_DIR" || exit 1

|

||||

python3 -m venv venv

|

||||

source venv/bin/activate

|

||||

|

||||

echo -e "${GREEN}克隆仓库...${RESET}"

|

||||

# 安装 Maimbot

|

||||

mkdir -p "$INSTALL_DIR/repo"

|

||||

cd "$INSTALL_DIR/repo" || exit 1

|

||||

git clone -b "$BRANCH" "$GITHUB_REPO" .

|

||||

|

||||

echo -e "${GREEN}安装 Python 依赖...${RESET}"

|

||||

pip install -r requirements.txt

|

||||

|

||||

echo -e "${GREEN}设置服务...${RESET}"

|

||||

|

||||

# 设置 Maimbot 服务

|

||||

cat <<EOF | tee /etc/systemd/system/$SERVICE_NAME.service

|

||||

[Unit]

|

||||

Description=MaiMbot 麦麦

|

||||

After=network.target mongod.service

|

||||

|

||||

[Service]

|

||||

Type=simple

|

||||

WorkingDirectory=$INSTALL_DIR/repo/

|

||||

ExecStart=$INSTALL_DIR/venv/bin/python3 bot.py

|

||||

ExecStop=/bin/kill -2 \$MAINPID

|

||||

Restart=always

|

||||

RestartSec=10s

|

||||

|

||||

[Install]

|

||||

WantedBy=multi-user.target

|

||||

EOF

|

||||

|

||||

systemctl daemon-reload

|

||||

systemctl enable maimbot

|

||||

systemctl start maimbot

|

||||

|

||||

whiptail --title "🎉 安装完成" --msgbox "麦麦Bot安装完成!\n已经启动麦麦Bot服务。\n\n安装路径: $INSTALL_DIR\n分支: $BRANCH" 12 60

|

||||

|

|

@ -1,73 +1,51 @@

|

|||

from typing import Optional

|

||||

import os

|

||||

from typing import cast

|

||||

from pymongo import MongoClient

|

||||

from pymongo.database import Database as MongoDatabase

|

||||

from pymongo.database import Database

|

||||

|

||||

class Database:

|

||||

_instance: Optional["Database"] = None

|

||||

|

||||

def __init__(

|

||||

self,

|

||||

host: str,

|

||||

port: int,

|

||||

db_name: str,

|

||||

username: Optional[str] = None,

|

||||

password: Optional[str] = None,

|

||||

auth_source: Optional[str] = None,

|

||||

uri: Optional[str] = None,

|

||||

):

|

||||

if uri and uri.startswith("mongodb://"):

|

||||

# 优先使用URI连接

|

||||

self.client = MongoClient(uri)

|

||||

elif username and password:

|

||||

# 如果有用户名和密码,使用认证连接

|

||||

self.client = MongoClient(

|

||||

host, port, username=username, password=password, authSource=auth_source

|

||||

)

|

||||

else:

|

||||

# 否则使用无认证连接

|

||||

self.client = MongoClient(host, port)

|

||||

self.db: MongoDatabase = self.client[db_name]

|

||||

|

||||

@classmethod

|

||||

def initialize(

|

||||

cls,

|

||||

host: str,

|

||||

port: int,

|

||||

db_name: str,

|

||||

username: Optional[str] = None,

|

||||

password: Optional[str] = None,

|

||||

auth_source: Optional[str] = None,

|

||||

uri: Optional[str] = None,

|

||||

) -> MongoDatabase:

|

||||

if cls._instance is None:

|

||||

cls._instance = cls(

|

||||

host, port, db_name, username, password, auth_source, uri

|

||||

)

|

||||

return cls._instance.db

|

||||

|

||||

@classmethod

|

||||

def get_instance(cls) -> MongoDatabase:

|

||||

if cls._instance is None:

|

||||

raise RuntimeError("Database not initialized")

|

||||

return cls._instance.db

|

||||

_client = None

|

||||

_db = None

|

||||

|

||||

|

||||

#测试用

|

||||

|

||||

def get_random_group_messages(self, group_id: str, limit: int = 5):

|

||||

# 先随机获取一条消息

|

||||

random_message = list(self.db.messages.aggregate([

|

||||

{"$match": {"group_id": group_id}},

|

||||

{"$sample": {"size": 1}}

|

||||

]))[0]

|

||||

|

||||

# 获取该消息之后的消息

|

||||

subsequent_messages = list(self.db.messages.find({

|

||||

"group_id": group_id,

|

||||

"time": {"$gt": random_message["time"]}

|

||||

}).sort("time", 1).limit(limit))

|

||||

|

||||

# 将随机消息和后续消息合并

|

||||

messages = [random_message] + subsequent_messages

|

||||

|

||||

return messages

|

||||

def __create_database_instance():

|

||||

uri = os.getenv("MONGODB_URI")

|

||||

host = os.getenv("MONGODB_HOST", "127.0.0.1")

|

||||

port = int(os.getenv("MONGODB_PORT", "27017"))

|

||||

db_name = os.getenv("DATABASE_NAME", "MegBot")

|

||||

username = os.getenv("MONGODB_USERNAME")

|

||||

password = os.getenv("MONGODB_PASSWORD")

|

||||

auth_source = os.getenv("MONGODB_AUTH_SOURCE")

|

||||

|

||||

if uri and uri.startswith("mongodb://"):

|

||||

# 优先使用URI连接

|

||||

return MongoClient(uri)

|

||||

|

||||

if username and password:

|

||||

# 如果有用户名和密码,使用认证连接

|

||||

return MongoClient(host, port, username=username, password=password, authSource=auth_source)

|

||||

|

||||

# 否则使用无认证连接

|

||||

return MongoClient(host, port)

|

||||

|

||||

|

||||

def get_db():

|

||||

"""获取数据库连接实例,延迟初始化。"""

|

||||

global _client, _db

|

||||

if _client is None:

|

||||

_client = __create_database_instance()

|

||||

_db = _client[os.getenv("DATABASE_NAME", "MegBot")]

|

||||

return _db

|

||||

|

||||

|

||||

class DBWrapper:

|

||||

"""数据库代理类,保持接口兼容性同时实现懒加载。"""

|

||||

|

||||

def __getattr__(self, name):

|

||||

return getattr(get_db(), name)

|

||||

|

||||

def __getitem__(self, key):

|

||||

return get_db()[key]

|

||||

|

||||

|

||||

# 全局数据库访问点

|

||||

db: Database = DBWrapper()

|

||||

|

|

|

|||

|

|

@ -7,7 +7,7 @@ from datetime import datetime

|

|||

from typing import Dict, List

|

||||

from loguru import logger

|

||||

from typing import Optional

|

||||

from ..common.database import Database

|

||||

|

||||

|

||||

import customtkinter as ctk

|

||||

from dotenv import load_dotenv

|

||||

|

|

@ -16,6 +16,8 @@ from dotenv import load_dotenv

|

|||

current_dir = os.path.dirname(os.path.abspath(__file__))

|

||||

# 获取项目根目录

|

||||

root_dir = os.path.abspath(os.path.join(current_dir, '..', '..'))

|

||||

sys.path.insert(0, root_dir)

|

||||

from src.common.database import db

|

||||

|

||||

# 加载环境变量

|

||||

if os.path.exists(os.path.join(root_dir, '.env.dev')):

|

||||

|

|

@ -44,28 +46,6 @@ class ReasoningGUI:

|

|||

self.root.geometry('800x600')

|

||||

self.root.protocol("WM_DELETE_WINDOW", self._on_closing)

|

||||

|

||||

# 初始化数据库连接

|

||||

try:

|

||||

self.db = Database.get_instance()

|

||||

logger.success("数据库连接成功")

|

||||

except RuntimeError:

|

||||

logger.warning("数据库未初始化,正在尝试初始化...")

|

||||

try:

|

||||

Database.initialize(

|

||||

uri=os.getenv("MONGODB_URI"),

|

||||

host=os.getenv("MONGODB_HOST", "127.0.0.1"),

|

||||

port=int(os.getenv("MONGODB_PORT", "27017")),

|

||||

db_name=os.getenv("DATABASE_NAME", "MegBot"),

|

||||

username=os.getenv("MONGODB_USERNAME"),

|

||||

password=os.getenv("MONGODB_PASSWORD"),

|

||||

auth_source=os.getenv("MONGODB_AUTH_SOURCE"),

|

||||

)

|

||||

self.db = Database.get_instance()

|

||||

logger.success("数据库初始化成功")

|

||||

except Exception:

|

||||

logger.exception("数据库初始化失败")

|

||||

sys.exit(1)

|

||||

|

||||

# 存储群组数据

|

||||

self.group_data: Dict[str, List[dict]] = {}

|

||||

|

||||

|

|

@ -264,11 +244,11 @@ class ReasoningGUI:

|

|||

logger.debug(f"查询条件: {query}")

|

||||

|

||||

# 先获取一条记录检查时间格式

|

||||

sample = self.db.reasoning_logs.find_one()

|

||||

sample = db.reasoning_logs.find_one()

|

||||

if sample:

|

||||

logger.debug(f"样本记录时间格式: {type(sample['time'])} 值: {sample['time']}")

|

||||

|

||||

cursor = self.db.reasoning_logs.find(query).sort("time", -1)

|

||||

cursor = db.reasoning_logs.find(query).sort("time", -1)

|

||||

new_data = {}

|

||||

total_count = 0

|

||||

|

||||

|

|

@ -333,17 +313,6 @@ class ReasoningGUI:

|

|||

|

||||

|

||||

def main():

|

||||

"""主函数"""

|

||||

Database.initialize(

|

||||

uri=os.getenv("MONGODB_URI"),

|

||||

host=os.getenv("MONGODB_HOST", "127.0.0.1"),

|

||||

port=int(os.getenv("MONGODB_PORT", "27017")),

|

||||

db_name=os.getenv("DATABASE_NAME", "MegBot"),

|

||||

username=os.getenv("MONGODB_USERNAME"),

|

||||

password=os.getenv("MONGODB_PASSWORD"),

|

||||

auth_source=os.getenv("MONGODB_AUTH_SOURCE"),

|

||||

)

|

||||

|

||||

app = ReasoningGUI()

|

||||

app.run()

|

||||

|

||||

|

|

|

|||

|

|

@ -3,11 +3,11 @@ import time

|

|||

import os

|

||||

|

||||

from loguru import logger

|

||||

from nonebot import get_driver, on_message, require

|

||||

from nonebot.adapters.onebot.v11 import Bot, GroupMessageEvent, Message, MessageSegment,MessageEvent

|

||||

from nonebot import get_driver, on_message, on_notice, require

|

||||

from nonebot.rule import to_me

|

||||

from nonebot.adapters.onebot.v11 import Bot, GroupMessageEvent, Message, MessageSegment, MessageEvent, NoticeEvent

|

||||

from nonebot.typing import T_State

|

||||

|

||||

from ...common.database import Database

|

||||

from ..moods.moods import MoodManager # 导入情绪管理器

|

||||

from ..schedule.schedule_generator import bot_schedule

|

||||

from ..utils.statistic import LLMStatistics

|

||||

|

|

@ -40,6 +40,8 @@ logger.debug(f"正在唤醒{global_config.BOT_NICKNAME}......")

|

|||

chat_bot = ChatBot()

|

||||

# 注册消息处理器

|

||||

msg_in = on_message(priority=5)

|

||||

# 注册和bot相关的通知处理器

|

||||

notice_matcher = on_notice(priority=1)

|

||||

# 创建定时任务

|

||||

scheduler = require("nonebot_plugin_apscheduler").scheduler

|

||||

|

||||

|

|

@ -96,19 +98,24 @@ async def _(bot: Bot, event: MessageEvent, state: T_State):

|

|||

await chat_bot.handle_message(event, bot)

|

||||

|

||||

|

||||

@notice_matcher.handle()

|

||||

async def _(bot: Bot, event: NoticeEvent, state: T_State):

|

||||

logger.debug(f"收到通知:{event}")

|

||||

await chat_bot.handle_notice(event, bot)

|

||||

|

||||

|

||||

# 添加build_memory定时任务

|

||||

@scheduler.scheduled_job("interval", seconds=global_config.build_memory_interval, id="build_memory")

|

||||

async def build_memory_task():

|

||||

"""每build_memory_interval秒执行一次记忆构建"""

|

||||

logger.debug(

|

||||

"[记忆构建]"

|

||||

"------------------------------------开始构建记忆--------------------------------------")

|

||||

logger.debug("[记忆构建]------------------------------------开始构建记忆--------------------------------------")

|

||||

start_time = time.time()

|

||||

await hippocampus.operation_build_memory(chat_size=20)

|

||||

end_time = time.time()

|

||||

logger.success(

|

||||

f"[记忆构建]--------------------------记忆构建完成:耗时: {end_time - start_time:.2f} "

|

||||

"秒-------------------------------------------")

|

||||

"秒-------------------------------------------"

|

||||

)

|

||||

|

||||

|

||||

@scheduler.scheduled_job("interval", seconds=global_config.forget_memory_interval, id="forget_memory")

|

||||

|

|

@ -132,3 +139,12 @@ async def print_mood_task():

|

|||

"""每30秒打印一次情绪状态"""

|

||||

mood_manager = MoodManager.get_instance()

|

||||

mood_manager.print_mood_status()

|

||||

|

||||

|

||||

@scheduler.scheduled_job("interval", seconds=7200, id="generate_schedule")

|

||||

async def generate_schedule_task():

|

||||

"""每2小时尝试生成一次日程"""

|

||||

logger.debug("尝试生成日程")

|

||||

await bot_schedule.initialize()

|

||||

if not bot_schedule.enable_output:

|

||||

bot_schedule.print_schedule()

|

||||

|

|

|

|||

|

|

@ -7,6 +7,8 @@ from nonebot.adapters.onebot.v11 import (

|

|||

GroupMessageEvent,

|

||||

MessageEvent,

|

||||

PrivateMessageEvent,

|

||||

NoticeEvent,

|

||||

PokeNotifyEvent,

|

||||

)

|

||||

|

||||

from ..memory_system.memory import hippocampus

|

||||

|

|

@ -25,6 +27,7 @@ from .relationship_manager import relationship_manager

|

|||

from .storage import MessageStorage

|

||||

from .utils import calculate_typing_time, is_mentioned_bot_in_message

|

||||

from .utils_image import image_path_to_base64

|

||||

from .utils_user import get_user_nickname, get_user_cardname, get_groupname

|

||||

from .willing_manager import willing_manager # 导入意愿管理器

|

||||

from .message_base import UserInfo, GroupInfo, Seg

|

||||

|

||||

|

|

@ -46,6 +49,69 @@ class ChatBot:

|

|||

if not self._started:

|

||||

self._started = True

|

||||

|

||||

async def handle_notice(self, event: NoticeEvent, bot: Bot) -> None:

|

||||

"""处理收到的通知"""

|

||||

# 戳一戳通知

|

||||

if isinstance(event, PokeNotifyEvent):

|

||||

# 用户屏蔽,不区分私聊/群聊

|

||||

if event.user_id in global_config.ban_user_id:

|

||||

return

|

||||

reply_poke_probability = 1 # 回复戳一戳的概率

|

||||

|

||||

if random() < reply_poke_probability:

|

||||

user_info = UserInfo(

|

||||

user_id=event.user_id,

|

||||

user_nickname=get_user_nickname(event.user_id) or None,

|

||||

user_cardname=get_user_cardname(event.user_id) or None,

|

||||

platform="qq",

|

||||

)

|

||||

group_info = GroupInfo(group_id=event.group_id, group_name=None, platform="qq")

|

||||

message_cq = MessageRecvCQ(

|

||||

message_id=None,

|

||||

user_info=user_info,

|

||||

raw_message=str("[戳了戳]你"),

|

||||

group_info=group_info,

|

||||

reply_message=None,

|

||||

platform="qq",

|

||||

)

|

||||

message_json = message_cq.to_dict()

|

||||

|

||||

# 进入maimbot

|

||||

message = MessageRecv(message_json)

|

||||

groupinfo = message.message_info.group_info

|

||||

userinfo = message.message_info.user_info

|

||||

messageinfo = message.message_info

|

||||

|

||||

chat = await chat_manager.get_or_create_stream(

|

||||

platform=messageinfo.platform, user_info=userinfo, group_info=groupinfo

|

||||

)

|

||||

message.update_chat_stream(chat)

|

||||

await message.process()

|

||||

|

||||

bot_user_info = UserInfo(

|

||||

user_id=global_config.BOT_QQ,

|

||||

user_nickname=global_config.BOT_NICKNAME,

|

||||

platform=messageinfo.platform,

|

||||

)

|

||||

|

||||

response, raw_content = await self.gpt.generate_response(message)

|

||||

|

||||

if response:

|

||||

for msg in response:

|

||||

message_segment = Seg(type="text", data=msg)

|

||||

|

||||

bot_message = MessageSending(

|

||||

message_id=None,

|

||||

chat_stream=chat,

|

||||

bot_user_info=bot_user_info,

|

||||

sender_info=userinfo,

|

||||

message_segment=message_segment,

|

||||

reply=None,

|

||||

is_head=False,

|

||||

is_emoji=False,

|

||||

)

|

||||

message_manager.add_message(bot_message)
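
The gate at the top of `handle_notice` (ban check, then a probability draw) can be sketched in isolation. This is a minimal sketch, not the project's code: `PokeEvent`, `should_reply_to_poke`, and the injectable `rng` parameter are hypothetical names introduced here so the logic can be exercised without nonebot.

```python
import random
from dataclasses import dataclass
from typing import Callable, Set


@dataclass
class PokeEvent:
    """Hypothetical stand-in for nonebot's PokeNotifyEvent."""

    user_id: int
    group_id: int


def should_reply_to_poke(
    event: PokeEvent,
    banned_user_ids: Set[int],
    reply_probability: float = 1.0,
    rng: Callable[[], float] = random.random,
) -> bool:
    """Return True when the bot should answer a poke."""
    # Banned users are ignored regardless of chat type.
    if event.user_id in banned_user_ids:
        return False
    # random() yields a float in [0.0, 1.0), so a probability of 1 always passes.
    return rng() < reply_probability
```

Passing the random source as a parameter is only a testing convenience; the diff calls `random()` directly with a hard-coded probability of 1.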

|

||||

|

||||

async def handle_message(self, event: MessageEvent, bot: Bot) -> None:

|

||||

"""Handle an incoming message."""

|

||||

|

||||

|

|

@ -54,7 +120,10 @@ class ChatBot:

|

|||

# User ban list; applies to private and group chats alike

|

||||

if event.user_id in global_config.ban_user_id:

|

||||

return

|

||||

|

||||

|

||||

if event.reply and hasattr(event.reply, 'sender') and hasattr(event.reply.sender, 'user_id') and event.reply.sender.user_id in global_config.ban_user_id:

|

||||

logger.debug(f"跳过处理回复来自被ban用户 {event.reply.sender.user_id} 的消息")

|

||||

return

|

||||

# Handle private messages

|

||||

if isinstance(event, PrivateMessageEvent):

|

||||

if not global_config.enable_friend_chat:  # private-chat filter

|

||||

|

|

@ -126,7 +195,7 @@ class ChatBot:

|

|||

for word in global_config.ban_words:

|

||||

if word in message.processed_plain_text:

|

||||

logger.info(

|

||||

f"[{chat.group_info.group_name if chat.group_info.group_id else '私聊'}]{userinfo.user_nickname}:{message.processed_plain_text}"

|

||||

f"[{chat.group_info.group_name if chat.group_info else '私聊'}]{userinfo.user_nickname}:{message.processed_plain_text}"

|

||||

)

|

||||

logger.info(f"[过滤词识别]消息中含有{word},filtered")

|

||||

return

|

||||

|

|

@ -135,7 +204,7 @@ class ChatBot:

|

|||

for pattern in global_config.ban_msgs_regex:

|

||||

if re.search(pattern, message.raw_message):

|

||||

logger.info(

|

||||

f"[{chat.group_info.group_name if chat.group_info.group_id else '私聊'}]{message.user_nickname}:{message.raw_message}"

|

||||

f"[{chat.group_info.group_name if chat.group_info else '私聊'}]{userinfo.user_nickname}:{message.raw_message}"

|

||||

)

|

||||

logger.info(f"[正则表达式过滤]消息匹配到{pattern},filtered")

|

||||

return

|

||||

|

|

@ -143,7 +212,7 @@ class ChatBot:

|

|||

current_time = time.strftime("%Y-%m-%d %H:%M:%S", time.localtime(messageinfo.time))

|

||||

|

||||

# topic=await topic_identifier.identify_topic_llm(message.processed_plain_text)

|

||||

|

||||

|

||||

topic = ""

|

||||

interested_rate = await hippocampus.memory_activate_value(message.processed_plain_text) / 100

|

||||

logger.debug(f"对{message.processed_plain_text}的激活度:{interested_rate}")

|

||||

|

|

@ -164,7 +233,7 @@ class ChatBot:

|

|||

current_willing = willing_manager.get_willing(chat_stream=chat)

|

||||

|

||||

logger.info(

|

||||

f"[{current_time}][{chat.group_info.group_name if chat.group_info.group_id else '私聊'}]{chat.user_info.user_nickname}:"

|

||||

f"[{current_time}][{chat.group_info.group_name if chat.group_info else '私聊'}]{chat.user_info.user_nickname}:"

|

||||

f"{message.processed_plain_text}[回复意愿:{current_willing:.2f}][概率:{reply_probability * 100:.1f}%]"

|

||||

)
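
The three logging hunks above replace `chat.group_info.group_id` with `chat.group_info` in the conditional. A minimal sketch of why that matters, assuming `group_info` is `None` for private chats (the diff suggests this but does not show it); `GroupInfo` and `ChatStream` here are simplified stand-ins, not the project's classes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class GroupInfo:
    group_id: int
    group_name: str


@dataclass
class ChatStream:
    # Assumed to be None for private chats.
    group_info: Optional[GroupInfo] = None


def old_chat_label(chat: ChatStream) -> str:
    # Old form: dereferences group_info before checking it,
    # so a private chat raises AttributeError.
    return chat.group_info.group_name if chat.group_info.group_id else "私聊"


def chat_label(chat: ChatStream) -> str:
    # New form: test the object itself before touching its attributes.
    return chat.group_info.group_name if chat.group_info else "私聊"
```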

|

||||

|

||||

|

|

|

|||

|

|

@ -6,7 +6,7 @@ from typing import Dict, Optional

|

|||

|

||||

from loguru import logger

|

||||

|

||||

from ...common.database import Database

|

||||

from ...common.database import db

|

||||

from .message_base import GroupInfo, UserInfo

|

||||

|

||||

|

||||

|

|

@ -83,7 +83,6 @@ class ChatManager:

|

|||

def __init__(self):

|

||||

if not self._initialized:

|

||||

self.streams: Dict[str, ChatStream] = {} # stream_id -> ChatStream

|

||||

self.db = Database.get_instance()

|

||||

self._ensure_collection()

|

||||

self._initialized = True

|

||||

# Start initialization inside the event loop

|

||||

|

|

@ -111,11 +110,11 @@ class ChatManager:

|

|||

|

||||

def _ensure_collection(self):

|

||||

"""Ensure the database collection exists and create its indexes."""

|

||||

if "chat_streams" not in self.db.list_collection_names():

|

||||

self.db.create_collection("chat_streams")

|

||||

if "chat_streams" not in db.list_collection_names():

|

||||

db.create_collection("chat_streams")

|

||||

# Create indexes

|

||||

self.db.chat_streams.create_index([("stream_id", 1)], unique=True)

|

||||

self.db.chat_streams.create_index(

|

||||

db.chat_streams.create_index([("stream_id", 1)], unique=True)

|

||||

db.chat_streams.create_index(

|

||||

[("platform", 1), ("user_info.user_id", 1), ("group_info.group_id", 1)]

|

||||

)

|

||||

|

||||

|

|

@ -168,7 +167,7 @@ class ChatManager:

|

|||

return stream

|

||||

|

||||

# Check whether the stream already exists in the database

|

||||

data = self.db.chat_streams.find_one({"stream_id": stream_id})

|

||||

data = db.chat_streams.find_one({"stream_id": stream_id})

|

||||

if data:

|

||||

stream = ChatStream.from_dict(data)

|

||||

# Refresh user info and group info

|

||||

|

|

@ -204,7 +203,7 @@ class ChatManager:

|

|||

async def _save_stream(self, stream: ChatStream):

|

||||

"""Save a chat stream to the database."""

|

||||

if not stream.saved:

|

||||

self.db.chat_streams.update_one(

|

||||

db.chat_streams.update_one(

|

||||

{"stream_id": stream.stream_id}, {"$set": stream.to_dict()}, upsert=True

|

||||

)

|

||||

stream.saved = True

|

||||

|

|

@ -216,7 +215,7 @@ class ChatManager:

|

|||

|

||||

async def load_all_streams(self):

|

||||

"""Load all chat streams from the database."""

|

||||

all_streams = self.db.chat_streams.find({})

|

||||

all_streams = db.chat_streams.find({})

|

||||

for data in all_streams:

|

||||

stream = ChatStream.from_dict(data)

|

||||

self.streams[stream.stream_id] = stream
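
Throughout this file the per-instance `self.db = Database.get_instance()` is replaced by a module-level `db` imported from `common.database`. The sketch below illustrates that pattern only; how the real `db` object is constructed is not shown in this diff, so `FakeDatabase` is a hypothetical in-memory stand-in that mimics the two calls `_ensure_collection` relies on.

```python
from typing import Dict, List


class FakeDatabase:
    """Hypothetical in-memory stand-in for a MongoDB database handle."""

    def __init__(self) -> None:
        self.collections: Dict[str, list] = {}

    def list_collection_names(self) -> List[str]:
        return list(self.collections)

    def create_collection(self, name: str) -> None:
        self.collections[name] = []


# Module-level handle: created once at import time and shared by every importer,
# so classes no longer need to store their own reference.
db = FakeDatabase()


class ChatManager:
    def __init__(self) -> None:
        self._ensure_collection()

    def _ensure_collection(self) -> None:
        # Idempotent: the collection is created only if it is missing.
        if "chat_streams" not in db.list_collection_names():
            db.create_collection("chat_streams")
```

Because the check-then-create step is idempotent, constructing the manager more than once leaves a single `chat_streams` collection.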

|

||||

|

|

|

|||

|

|

@ -86,9 +86,12 @@ class CQCode:

|

|||

else:

|

||||

self.translated_segments = Seg(type="text", data="[图片]")

|

||||

elif self.type == "at":

|

||||

user_nickname = get_user_nickname(self.params.get("qq", ""))

|

||||

self.translated_segments = Seg(

|

||||

type="text", data=f"[@{user_nickname or '某人'}]"

|

||||

if self.params.get("qq") == "all":

|

||||

self.translated_segments = Seg(type="text", data="@[全体成员]")

|

||||

else:

|

||||

user_nickname = get_user_nickname(self.params.get("qq", ""))

|

||||

self.translated_segments = Seg(

|

||||

type="text", data=f"[@{user_nickname or '某人'}]"

|

||||

)

|

||||

elif self.type == "reply":

|

||||

reply_segments = self.translate_reply()
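
The `at` branch now special-cases `qq == "all"` before looking up a nickname. A minimal sketch of the resulting text rendering, with a hypothetical `render_at` helper and the nickname lookup passed in as a callable (in the diff it is `get_user_nickname`); the output strings are copied from the diff as-is.

```python
from typing import Callable, Dict, Optional


def render_at(
    params: Dict[str, str],
    get_nickname: Callable[[str], Optional[str]],
) -> str:
    """Render an @-mention CQ code as plain text."""
    # @all has no single user behind it, so skip the nickname lookup.
    if params.get("qq") == "all":
        return "@[全体成员]"
    nickname = get_nickname(params.get("qq", ""))
    # Fall back to a generic label when the nickname is unknown.
    return f"[@{nickname or '某人'}]"
```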

|

||||

|

|

|

|||

|

|

@ -12,7 +12,7 @@ import io

|

|||

from loguru import logger

|

||||

from nonebot import get_driver

|

||||

|

||||

from ...common.database import Database

|

||||

from ...common.database import db

|

||||

from ..chat.config import global_config

|

||||

from ..chat.utils import get_embedding

|

||||

from ..chat.utils_image import ImageManager, image_path_to_base64

|

||||

|

|

@ -25,22 +25,20 @@ image_manager = ImageManager()

|

|||

|

||||

class EmojiManager:

|

||||

_instance = None

|

||||

EMOJI_DIR = "data/emoji"  # emoji storage directory

|

||||

EMOJI_DIR = os.path.join("data", "emoji")  # emoji storage directory

|

||||

|

||||

def __new__(cls):

|

||||

if cls._instance is None:

|

||||

cls._instance = super().__new__(cls)

|

||||

cls._instance.db = None

|

||||

cls._instance._initialized = False

|

||||

return cls._instance

|

||||

|

||||

def __init__(self):

|

||||

self.db = Database.get_instance()

|

||||

self._scan_task = None

|

||||

self.vlm = LLM_request(model=global_config.vlm, temperature=0.3, max_tokens=1000)

|

||||

self.llm_emotion_judge = LLM_request(model=global_config.llm_emotion_judge, max_tokens=60,

|

||||

temperature=0.8)  # higher temperature, fewer tokens (temperature could later be adjusted by mood)

|

||||

|

||||

self.llm_emotion_judge = LLM_request(

|

||||

model=global_config.llm_emotion_judge, max_tokens=60, temperature=0.8

|

||||

)  # higher temperature, fewer tokens (temperature could later be adjusted by mood)

|

||||

|

||||

def _ensure_emoji_dir(self):

|

||||

"""Ensure the emoji storage directory exists."""

|

||||

|

|

@ -50,7 +48,6 @@ class EmojiManager:

|

|||

"""Initialize the database connection and the emoji directory."""

|

||||

if not self._initialized:

|

||||

try:

|

||||

self.db = Database.get_instance()

|

||||

self._ensure_emoji_collection()

|

||||

self._ensure_emoji_dir()

|

||||

self._initialized = True

|

||||

|

|

@ -68,42 +65,39 @@ class EmojiManager:

|

|||

|

||||

def _ensure_emoji_collection(self):

|

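||||

"""Ensure the emoji collection exists and create its indexes.

This function makes sure the MongoDB database has an emoji collection and creates the indexes it needs.

Indexes speed up database queries:
- 2dsphere index on the embedding field: intended to speed up vector similarity search and help find similar emojis quickly
- regular index on the tags field: speeds up searching emojis by tag
- unique index on the filename field: keeps filenames unique and speeds up lookup by filename

Without indexes every query has to scan all the data; with them, queries become much faster.
"""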

|

||||

if 'emoji' not in self.db.list_collection_names():

|

||||

self.db.create_collection('emoji')

|

||||

self.db.emoji.create_index([('embedding', '2dsphere')])

|

||||

self.db.emoji.create_index([('filename', 1)], unique=True)

|

||||

if "emoji" not in db.list_collection_names():

|

||||

db.create_collection("emoji")

|

||||

db.emoji.create_index([("embedding", "2dsphere")])

|

||||

db.emoji.create_index([("filename", 1)], unique=True)

|

||||

|

||||

def record_usage(self, emoji_id: str):

|

||||

"""Record one more use of an emoji."""

|

||||

try:

|

||||

self._ensure_db()

|

||||

self.db.emoji.update_one(

|

||||

{'_id': emoji_id},

|

||||

{'$inc': {'usage_count': 1}}

|

||||

)

|

||||

db.emoji.update_one({"_id": emoji_id}, {"$inc": {"usage_count": 1}})

|

||||

except Exception as e:

|

||||

logger.error(f"记录表情使用失败: {str(e)}")

|

||||

|

||||

async def get_emoji_for_text(self, text: str) -> Optional[Tuple[str,str]]:

|

||||

|

||||

async def get_emoji_for_text(self, text: str) -> Optional[Tuple[str, str]]:

|

||||

"""Get an emoji related to the given text.
Args:
text: input text
Returns:
Optional[Tuple[str, str]]: the emoji file path and its bracketed description, or None if nothing was found

Could the emoji-selection logic be customized through directives in the config file?
I think that is feasible.
"""

|

||||

try:

|

||||

|

|

@ -121,7 +115,7 @@ class EmojiManager:

|

|||

|

||||

try:

|

||||

# Fetch all emojis

|

||||

all_emojis = list(self.db.emoji.find({}, {'_id': 1, 'path': 1, 'embedding': 1, 'description': 1}))

|

||||

all_emojis = list(db.emoji.find({}, {"_id": 1, "path": 1, "embedding": 1, "description": 1}))

|

||||

|

||||

if not all_emojis:

|

||||

logger.warning("数据库中没有任何表情包")

|

||||

|

|

@ -140,34 +134,31 @@ class EmojiManager:

|

|||

|

||||

# Compute the similarity between every emoji and the input text

|

||||

emoji_similarities = [

|

||||

(emoji, cosine_similarity(text_embedding, emoji.get('embedding', [])))

|

||||

for emoji in all_emojis

|

||||

(emoji, cosine_similarity(text_embedding, emoji.get("embedding", []))) for emoji in all_emojis

|

||||

]

|

||||

|

||||

# Sort by similarity, descending

|

||||

emoji_similarities.sort(key=lambda x: x[1], reverse=True)

|

||||

|

||||

# Take the 10 most similar emojis

|

||||

top_10_emojis = emoji_similarities[:10 if len(emoji_similarities) > 10 else len(emoji_similarities)]

|

||||

|

||||

top_10_emojis = emoji_similarities[: 10 if len(emoji_similarities) > 10 else len(emoji_similarities)]

|

||||

|

||||

if not top_10_emojis:

|

||||

logger.warning("未找到匹配的表情包")

|

||||

return None

|

||||

|

||||

# Pick one at random from the top 10

|

||||

selected_emoji, similarity = random.choice(top_10_emojis)

|

||||

|

||||

if selected_emoji and 'path' in selected_emoji:

|

||||

|

||||

if selected_emoji and "path" in selected_emoji:

|

||||

# Update the usage count

|

||||

self.db.emoji.update_one(

|

||||

{'_id': selected_emoji['_id']},

|

||||

{'$inc': {'usage_count': 1}}

|

||||

)

|

||||

db.emoji.update_one({"_id": selected_emoji["_id"]}, {"$inc": {"usage_count": 1}})

|

||||

|

||||

logger.success(

|

||||

f"找到匹配的表情包: {selected_emoji.get('description', '无描述')} (相似度: {similarity:.4f})")

|

||||

f"找到匹配的表情包: {selected_emoji.get('description', '无描述')} (相似度: {similarity:.4f})"

|

||||

)

|

||||

# Adjust the text description slightly to avoid hallucinations; the description already says it is an emoji

|

||||

return selected_emoji['path'], "[ %s ]" % selected_emoji.get('description', '无描述')

|

||||

return selected_emoji["path"], "[ %s ]" % selected_emoji.get("description", "无描述")

|

||||

|

||||

except Exception as search_error:

|

||||

logger.error(f"搜索表情包失败: {str(search_error)}")
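
The selection step in `get_emoji_for_text` (score every emoji by cosine similarity, keep the top 10, choose one at random) can be sketched as below. This is a sketch under assumptions: the project's own `cosine_similarity` is not shown in this diff, so the version here is a plain-Python stand-in, and `pick_emoji` is a hypothetical name.

```python
import math
import random
from typing import Dict, List, Optional, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 for empty, mismatched, or zero vectors."""
    if not a or not b or len(a) != len(b):
        return 0.0
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def pick_emoji(
    text_embedding: Sequence[float],
    emojis: List[Dict],
    top_k: int = 10,
    rng=random,
) -> Optional[Tuple[Dict, float]]:
    """Return a random (emoji, similarity) pair from the top_k most similar."""
    scored = [(e, cosine_similarity(text_embedding, e.get("embedding", []))) for e in emojis]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    # Slicing never raises on short lists, so no explicit length check is needed.
    top = scored[:top_k]
    if not top:
        return None
    return rng.choice(top)
```

Since a slice such as `scored[:10]` already clamps to the list length, it is equivalent to the conditional slice bound used in the diff.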

|

||||

|

|

@ -179,7 +170,6 @@ class EmojiManager:

|

|||

logger.error(f"获取表情包失败: {str(e)}")

|

||||

return None

|

||||

|

||||

|

||||

async def _get_emoji_discription(self, image_base64: str) -> str:

|

||||

"""Get the emoji's tags, using image_manager's description generation."""

|

||||

|

||||

|

|

@ -187,16 +177,16 @@ class EmojiManager:

|

|||

# Get the description via image_manager, then drop the surrounding square brackets and the "表情包:" prefix

|

||||

description = await image_manager.get_emoji_description(image_base64)

|

||||

# Strip the [表情包:xxx] wrapper and keep only the description text

|

||||

description = description.strip('[]').replace('表情包:', '')

|

||||

description = description.strip("[]").replace("表情包:", "")

|

||||

return description

|

||||

|

||||

|

||||

except Exception as e:

|

||||

logger.error(f"获取标签失败: {str(e)}")

|

||||

return None

|

||||

|

||||

async def _check_emoji(self, image_base64: str, image_format: str) -> str:

|

||||

try:

|

||||

prompt = f'这是一个表情包,请回答这个表情包是否满足\"{global_config.EMOJI_CHECK_PROMPT}\"的要求,是则回答是,否则回答否,不要出现任何其他内容'

|

||||

prompt = f'这是一个表情包,请回答这个表情包是否满足"{global_config.EMOJI_CHECK_PROMPT}"的要求,是则回答是,否则回答否,不要出现任何其他内容'

|

||||

|

||||

content, _ = await self.vlm.generate_response_for_image(prompt, image_base64, image_format)

|

||||

logger.debug(f"输出描述: {content}")

|

||||

|

|

@ -208,9 +198,9 @@ class EmojiManager:

|

|||

|

||||

async def _get_kimoji_for_text(self, text: str):

|

||||

try:

|

||||

prompt = f'这是{global_config.BOT_NICKNAME}将要发送的消息内容:\n{text}\n若要为其配上表情包,请你输出这个表情包应该表达怎样的情感,应该给人什么样的感觉,不要太简洁也不要太长,注意不要输出任何对消息内容的分析内容,只输出\"一种什么样的感觉\"中间的形容词部分。'

|

||||

prompt = f'这是{global_config.BOT_NICKNAME}将要发送的消息内容:\n{text}\n若要为其配上表情包,请你输出这个表情包应该表达怎样的情感,应该给人什么样的感觉,不要太简洁也不要太长,注意不要输出任何对消息内容的分析内容,只输出"一种什么样的感觉"中间的形容词部分。'

|

||||

|

||||

content, _ = await self.llm_emotion_judge.generate_response_async(prompt,temperature=1.5)

|

||||

content, _ = await self.llm_emotion_judge.generate_response_async(prompt, temperature=1.5)

|

||||

logger.info(f"输出描述: {content}")

|

||||

return content

|

||||

|

||||

|

|

@ -221,67 +211,62 @@ class EmojiManager:

|

|||

async def scan_new_emojis(self):

|

||||

"""Scan for new emojis."""

|

||||

try:

|

||||

emoji_dir = "data/emoji"

|

||||

emoji_dir = self.EMOJI_DIR

|

||||

os.makedirs(emoji_dir, exist_ok=True)

|

||||

|

||||

# Collect all supported image files

|

||||

files_to_process = [f for f in os.listdir(emoji_dir) if

|

||||

f.lower().endswith(('.jpg', '.jpeg', '.png', '.gif'))]

|

||||

files_to_process = [

|

||||

f for f in os.listdir(emoji_dir) if f.lower().endswith((".jpg", ".jpeg", ".png", ".gif"))

|

||||

]

|

||||

|

||||

for filename in files_to_process:

|

||||

image_path = os.path.join(emoji_dir, filename)

|

||||

|

||||

|

||||

# Get the image's base64 encoding and hash

|

||||

image_base64 = image_path_to_base64(image_path)

|

||||

if image_base64 is None:

|

||||

os.remove(image_path)

|

||||

continue

|

||||

|

||||

|

||||

image_bytes = base64.b64decode(image_base64)

|

||||

image_hash = hashlib.md5(image_bytes).hexdigest()

|

||||

image_format = Image.open(io.BytesIO(image_bytes)).format.lower()

|

||||

# Check whether the emoji is already registered

|

||||

existing_emoji = self.db['emoji'].find_one({'filename': filename})

|

||||

existing_emoji = db["emoji"].find_one({"hash": image_hash})

|

||||

description = None

|

||||

|

||||

|

||||

if existing_emoji:

|

||||

# Even if the emoji already exists, check whether it still needs syncing to the images collection

|

||||

description = existing_emoji.get('discription')

|

||||

description = existing_emoji.get("discription")

|

||||

# Check whether it exists in the images collection

|

||||

existing_image = image_manager.db.images.find_one({'hash': image_hash})

|

||||

existing_image = db.images.find_one({"hash": image_hash})

|

||||

if not existing_image:

|

||||

# Sync to the images collection

|

||||

image_doc = {

|

||||

'hash': image_hash,

|

||||

'path': image_path,

|

||||

'type': 'emoji',

|

||||

'description': description,

|

||||

'timestamp': int(time.time())

|

||||

"hash": image_hash,

|

||||

"path": image_path,

|

||||

"type": "emoji",

|

||||

"description": description,

|

||||

"timestamp": int(time.time()),

|

||||

}

|

||||

image_manager.db.images.update_one(

|

||||

{'hash': image_hash},

|

||||

{'$set': image_doc},

|

||||

upsert=True

|

||||

)

|

||||

db.images.update_one({"hash": image_hash}, {"$set": image_doc}, upsert=True)

|

||||

# Save the description to the image_descriptions collection

|

||||

image_manager._save_description_to_db(image_hash, description, 'emoji')

|

||||

image_manager._save_description_to_db(image_hash, description, "emoji")

|

||||

logger.success(f"同步已存在的表情包到images集合: {filename}")

|

||||

continue

|

||||

|

||||

|

||||

# Check whether a description is already stored for this image

|

||||

existing_description = image_manager._get_description_from_db(image_hash, 'emoji')

|

||||

|

||||

existing_description = image_manager._get_description_from_db(image_hash, "emoji")

|

||||

|

||||

if existing_description:

|

||||

description = existing_description

|

||||

else:

|

||||

# Generate a description for the emoji

|

||||

description = await self._get_emoji_discription(image_base64)

|

||||

|

||||

|

||||

|

||||

if global_config.EMOJI_CHECK:

|

||||

check = await self._check_emoji(image_base64, image_format)

|

||||

if '是' not in check:

|

||||

if "是" not in check:

|

||||

os.remove(image_path)

|

||||

logger.info(f"描述: {description}")

|

||||

|

||||

|

|

@ -289,44 +274,39 @@ class EmojiManager:

|

|||

logger.info(f"其不满足过滤规则,被剔除 {check}")

|

||||

continue

|

||||

logger.info(f"check通过 {check}")

|

||||

|

||||

|

||||

if description is not None:

|

||||

embedding = await get_embedding(description)

|

||||

|

||||

|

||||

if description is not None:

|

||||

embedding = await get_embedding(description)

|

||||

|

||||

# Build the database record

|

||||

emoji_record = {

|

||||

'filename': filename,

|

||||

'path': image_path,

|

||||

'embedding': embedding,

|

||||

'discription': description,

|

||||

'hash': image_hash,

|

||||

'timestamp': int(time.time())

|

||||

"filename": filename,

|

||||

"path": image_path,

|

||||

"embedding": embedding,

|

||||

"discription": description,

|

||||

"hash": image_hash,

|

||||

"timestamp": int(time.time()),

|

||||

}

|

||||

|

||||

|

||||

# Save to the emoji collection

|

||||

self.db['emoji'].insert_one(emoji_record)

|

||||

db["emoji"].insert_one(emoji_record)

|

||||

logger.success(f"注册新表情包: {filename}")

|

||||

logger.info(f"描述: {description}")

|

||||

|

||||

|

||||

# Save to the images collection

|

||||

image_doc = {

|

||||

'hash': image_hash,

|

||||

'path': image_path,

|

||||

'type': 'emoji',

|

||||

'description': description,

|

||||

'timestamp': int(time.time())

|

||||

"hash": image_hash,

|

||||

"path": image_path,

|

||||

"type": "emoji",

|

||||

"description": description,

|

||||

"timestamp": int(time.time()),

|

||||

}

|

||||

image_manager.db.images.update_one(

|

||||

{'hash': image_hash},

|

||||

{'$set': image_doc},

|

||||

upsert=True

|

||||

)

|

||||

db.images.update_one({"hash": image_hash}, {"$set": image_doc}, upsert=True)

|

||||

# Save the description to the image_descriptions collection

|

||||

image_manager._save_description_to_db(image_hash, description, 'emoji')

|

||||

image_manager._save_description_to_db(image_hash, description, "emoji")

|

||||

logger.success(f"同步保存到images集合: {filename}")

|

||||

else:

|

||||

logger.warning(f"跳过表情包: {filename}")
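
In this hunk the "already registered" lookup switches from `{"filename": filename}` to `{"hash": image_hash}`, so identical images under different filenames are treated as the same emoji. A minimal sketch of that idea, using a plain set in place of the database query; `content_hash` and `is_registered` are hypothetical helper names.

```python
import base64
import hashlib
from typing import Set


def content_hash(image_base64: str) -> str:
    """MD5 of the decoded image bytes, as computed in scan_new_emojis."""
    return hashlib.md5(base64.b64decode(image_base64)).hexdigest()


def is_registered(image_base64: str, known_hashes: Set[str]) -> bool:
    # The filename plays no part: only the image content decides.
    return content_hash(image_base64) in known_hashes
```

MD5 is used here only as a content fingerprint for deduplication, matching the diff; it is not suitable where collision resistance matters.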

|

||||

|

|

@ -348,40 +328,47 @@ class EmojiManager:

|

|||

try:

|

||||

self._ensure_db()

|

||||

# Fetch all emoji records

|

||||

all_emojis = list(self.db.emoji.find())

|

||||

all_emojis = list(db.emoji.find())

|

||||

removed_count = 0

|

||||

total_count = len(all_emojis)

|

||||

|

||||

for emoji in all_emojis:

|

||||

try:

|

||||

if 'path' not in emoji:

|

||||

if "path" not in emoji:

|

||||

logger.warning(f"发现无效记录(缺少path字段),ID: {emoji.get('_id', 'unknown')}")

|

||||

self.db.emoji.delete_one({'_id': emoji['_id']})

|

||||

db.emoji.delete_one({"_id": emoji["_id"]})

|

||||

removed_count += 1

|

||||

continue

|

||||

|

||||

if 'embedding' not in emoji:

|

||||

if "embedding" not in emoji:

|

||||

logger.warning(f"发现过时记录(缺少embedding字段),ID: {emoji.get('_id', 'unknown')}")

|

||||

self.db.emoji.delete_one({'_id': emoji['_id']})

|

||||

db.emoji.delete_one({"_id": emoji["_id"]})

|

||||

removed_count += 1

|

||||

continue

|

||||

|

||||

# Check whether the file still exists

|

||||

if not os.path.exists(emoji['path']):

|

||||

if not os.path.exists(emoji["path"]):

|

||||

logger.warning(f"表情包文件已被删除: {emoji['path']}")

|

||||

# Delete the record from the database

|

||||

result = self.db.emoji.delete_one({'_id': emoji['_id']})

|

||||

result = db.emoji.delete_one({"_id": emoji["_id"]})

|

||||

if result.deleted_count > 0:

|

||||

logger.debug(f"成功删除数据库记录: {emoji['_id']}")

|

||||

removed_count += 1

|

||||

else:

|

||||

logger.error(f"删除数据库记录失败: {emoji['_id']}")

|

||||

continue

|

||||

|

||||

if "hash" not in emoji:

|

||||

logger.warning(f"发现缺失记录(缺少hash字段),ID: {emoji.get('_id', 'unknown')}")

|

||||

hash = hashlib.md5(open(emoji["path"], "rb").read()).hexdigest()

|

||||

db.emoji.update_one({"_id": emoji["_id"]}, {"$set": {"hash": hash}})

|

||||

|

||||

except Exception as item_error:

|

||||

logger.error(f"处理表情包记录时出错: {str(item_error)}")

|

||||

continue

|

||||

|

||||

# Verify the cleanup result

|

||||

remaining_count = self.db.emoji.count_documents({})

|

||||

remaining_count = db.emoji.count_documents({})

|

||||

if removed_count > 0:

|

||||

logger.success(f"已清理 {removed_count} 个失效的表情包记录")

|

||||

logger.info(f"清理前总数: {total_count} | 清理后总数: {remaining_count}")
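
The integrity check now backfills a missing `hash` field by hashing the file on disk. The diff does this with a bare `open(...).read()` and a local variable named `hash`; the sketch below shows one alternative that closes the file deterministically and avoids shadowing the built-in. `file_md5` and its `chunk_size` parameter are hypothetical names, not part of the project.

```python
import hashlib


def file_md5(path: str, chunk_size: int = 65536) -> str:
    """Return the hex MD5 digest of a file, read in fixed-size chunks."""
    digest = hashlib.md5()
    # The context manager closes the handle even if reading fails.
    with open(path, "rb") as fh:
        # iter() with a sentinel stops once read() returns an empty bytes object.
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()
```

Reading in chunks keeps memory use flat for large images; the digest is the same as hashing the whole file at once.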

|

||||

|

|

@ -401,5 +388,3 @@ class EmojiManager:

|

|||

# Create the global singleton

|

||||

|

||||

emoji_manager = EmojiManager()

|

||||

|

||||

|

||||

|

|

|

|||

|

|

@ -5,7 +5,7 @@ from typing import List, Optional, Tuple, Union

|

|||

from nonebot import get_driver

|

||||

from loguru import logger

|

||||

|

||||

from ...common.database import Database

|

||||

from ...common.database import db

|

||||

from ..models.utils_model import LLM_request

|

||||

from .config import global_config

|

||||

from .message import MessageRecv, MessageThinking, Message

|

||||

|

|

@ -34,7 +34,6 @@ class ResponseGenerator:

|

|||

self.model_v25 = LLM_request(

|

||||

model=global_config.llm_normal_minor, temperature=0.7, max_tokens=1000

|

||||

)

|

||||

self.db = Database.get_instance()

|

||||

self.current_model_type = "r1"  # use R1 by default

|

||||

|

||||

async def generate_response(

|

||||

|

|

@ -154,7 +153,7 @@ class ResponseGenerator:

|

|||

reasoning_content: str,

|

||||

):

|

||||

"""Save the conversation record to the database."""

|

||||

self.db.reasoning_logs.insert_one(

|

||||

db.reasoning_logs.insert_one(

|

||||

{

|

||||

"time": time.time(),